
You can install DCGM either directly from the CUDA network repos or by downloading the installer packages. Review the release notes and the documentation for install instructions on supported distributions and platforms.

By downloading and using the software, you agree to fully comply with the terms and conditions of the NVIDIA DCGM License.

Older DCGM releases are also available from the repos.

Ubuntu

- Set up the CUDA network repository meta-data, GPG key

  `$ wget`

  `$ sudo mv cuda-ubuntu2004.pin /etc/apt/preferences.d/cuda-repository-pin-600`

- Install DCGM

  `$ sudo apt-get install -y datacenter-gpu-manager`

Red Hat

- Set up the CUDA network repository meta-data, GPG key

  `$ sudo dnf config-manager --add-repo`

- Install DCGM

  `$ sudo dnf clean expire-cache \`

  `&& sudo dnf install -y datacenter-gpu-manager`

- Set up the DCGM service
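The install step differs only by package manager, so a wrapper script can pick the right command from the distribution ID. The following is a minimal sketch, not part of the DCGM tooling: the function names `dcgm_install_cmd` and `detect_distro_id` are hypothetical, the ID strings `ubuntu` and `rhel` are assumed to match the `ID` field of `/etc/os-release`, and the function only prints the command quoted in the steps above rather than running it.

```shell
#!/bin/sh
# Sketch: print (do not run) the DCGM install command for a distribution.
# The two commands are the ones quoted in the install steps; the function
# names and accepted ID strings are assumptions made for this example.

# Read the ID field from /etc/os-release (e.g. "ubuntu", "rhel").
detect_distro_id() {
    ( . /etc/os-release && printf '%s\n' "$ID" )
}

# Map a distribution ID to the matching install command.
dcgm_install_cmd() {
    case "$1" in
        ubuntu)
            printf '%s\n' "sudo apt-get install -y datacenter-gpu-manager"
            ;;
        rhel)
            printf '%s\n' "sudo dnf install -y datacenter-gpu-manager"
            ;;
        *)
            printf 'unsupported distribution: %s\n' "$1" >&2
            return 1
            ;;
    esac
}
```

A typical call would be `dcgm_install_cmd "$(detect_distro_id)"`. Printing the command instead of executing it keeps the sketch safe to run on a machine without the CUDA repository configured.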

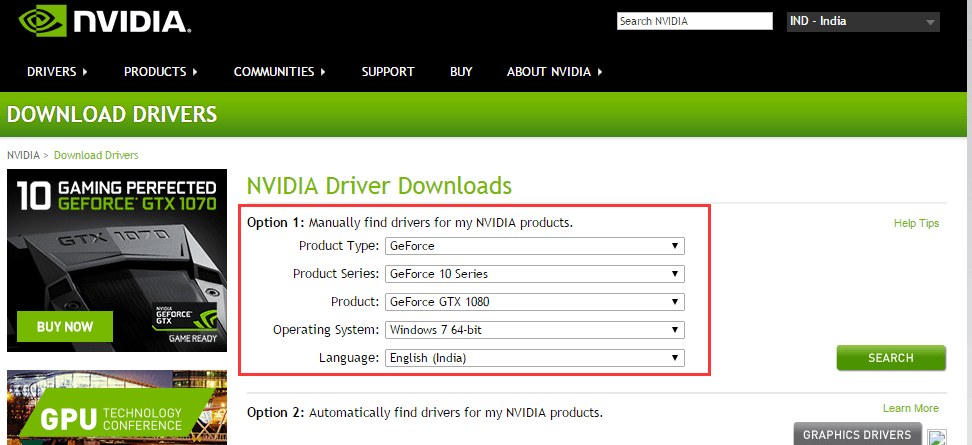


Note that it is recommended to use the latest R450+ NVIDIA datacenter driver, which can be downloaded from the NVIDIA Driver Downloads page.

As the recommended method, install DCGM directly from the CUDA network repos.
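Before installing, it can help to confirm that the installed driver meets the R450+ recommendation. The sketch below assumes that "R450+" means a driver whose major version number is 450 or higher; the function name `driver_is_r450_plus` is hypothetical and only inspects a version string passed to it.

```shell
#!/bin/sh
# Sketch: succeed if a driver version string is on the R450 branch or newer.
# Assumption: "R450+" means the major version number is >= 450.

driver_is_r450_plus() {
    version="$1"
    major="${version%%.*}"          # "470.57.02" -> "470"
    case "$major" in
        ''|*[!0-9]*) return 2 ;;    # empty or non-numeric input
    esac
    [ "$major" -ge 450 ]
}
```

On a machine with the driver installed, the version string can be taken from `nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1` and passed to the function, for example inside an `if` that aborts the install when the check fails.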

Manage and Monitor GPUs in Cluster Environments

NVIDIA Data Center GPU Manager (DCGM) is a suite of tools for managing and monitoring NVIDIA datacenter GPUs in cluster environments. It includes active health monitoring, comprehensive diagnostics, system alerts and governance policies including power and clock management. It can be used standalone by infrastructure teams and easily integrates into cluster management tools, resource scheduling and monitoring products from NVIDIA partners.

DCGM simplifies GPU administration in the data center, improves resource reliability and uptime, automates administrative tasks, and helps drive overall infrastructure efficiency. DCGM supports Linux operating systems on x86_64, Arm and POWER (ppc64le) platforms.

GH100, NVIDIA's next-generation "Hopper" data center GPU, will have some truly mind-blowing specs, according to recent rumors from Chip Hell. As claimed by user zhangzhonghao, the GPU will have 140 billion transistors, an astounding number that far exceeds current flagship data center GPUs such as AMD's Aldebaran (58.2 billion transistors) and NVIDIA's GA100 (54.2 billion transistors).

Prior reports stated that NVIDIA's GH100 would be manufactured on 5-nanometer technology and would have a die size close to 1000 mm², which would make it the world's largest graphics processing unit.

Furthermore, according to reports, Nvidia has developed what is called the COPA solution, based on the Hopper architecture. A COPA-GPU is a domain-specialized composable GPU architecture capable of providing high levels of GPU design reuse across the HPC and deep learning domains, while enabling specifically optimized products for each domain. With two separate designs based on the same architecture, Nvidia is reported to be planning two distinct solutions: one for high-performance computing (HPC) and another for deep learning (DL). While the HPC design would use the standard approach, the DL design would add a large, separate cache coupled to the graphics processor.

Nvidia is expected to unveil and detail the Hopper architecture GPU at GTC 2022.